Early this year, after a 45-minute call with one of our fund clients, I sat down to process the notes.

The call had touched 18 open tasks across three workstreams: data delivery timelines, outstanding fund documentation for five portfolio companies, and a decision on cashflow integration that needed to be deferred explicitly rather than left to drift silently.

It took me another 40 minutes to manually triage every item, match it to existing tasks, create new ones, and write the summary that would keep three different colleagues aligned on what was still outstanding.

By the time I was done, the next meeting had started.

What you'll take away from this

The bottleneck for senior operators using AI is not model intelligence. More often, it is the absence of persistent, structured context.

If you manage across functions, you are doing expensive manual work that AI could handle if it knew what you know.

The gap between what AI tools promise and what they deliver is a context problem, not a capability problem.

The background

I run data, research and operations at a financial intelligence company focused on African private capital markets. Two functions report to me, and I support several others.

In any given week, I'm in calls with clients, reviewing data architecture decisions, troubleshooting tooling that's crashing under concurrent use, training the team and trying to keep quarterly OKRs from falling behind.

I am not a casual AI user. I have a graduate degree in computer science from Oxford. I've built automation workflows, prototyped RAG pipelines for entity resolution, and connected Claude directly to internal systems through MCP.

I am the person this technology should work best for.

But it wasn't working.

The blank room problem

The problem was not the AI.

The problem was that every time I opened a new conversation, I started from zero. ChatGPT didn't know which problems I was dealing with as COO. It didn't know my direct reports, my OKRs, my stakeholders, my decision history, or the fact that I'd already discussed our database migration three times this month.

Karpathy describes what LLMs are missing: a persistent scratchpad, the ability to write knowledge for other instances, a growing repertoire of accumulated understanding. He asks, "Why can't an LLM write a book for the other LLMs?"

Every session was a blank room.

So I did what most people do. I copy-pasted. I maintained a Google Doc of "things AI should know about me" and dropped it into the system prompt. I later built a Claude Project with workspace IDs, team rosters, and OKR references loaded as knowledge files. I set up task management integrations. I created databases for meeting notes.

Each piece helped. None of them talked to each other.

The seed

This is the part where I'm supposed to tell you the idea arrived fully formed.

It didn't.

It started with a request I made to Claude: I wanted a better way to dump notes, call summaries, and raw thoughts into a conversation and have it update priorities and tasks on a board. Nothing was set up, and there was no clear structure for how to do it.

That request turned into a project. The project turned into an operating system. The operating system turned into the thing I'm now building as a product.

It kept growing because the pain points were structural, not cosmetic.

What broke

Information vanished after every conversation. Call notes got processed into task updates, but the notes themselves disappeared into chat history. Before my next call with a client or a direct report, I had no searchable record of what was discussed last time. I'd re-explain context, miss threads, or make commitments that contradicted ones I'd already made.

The AI helped me process the meeting. It did nothing to help me remember it.

Meeting-heavy weeks killed task progress. I'd commit to actions across five calls on Monday and Tuesday, and by Friday none of them had moved because the rest of the week was also calls. No system was forcing a reconciliation between what I committed to and what actually moved.

The gap between intention and execution sat in my head, which is the worst possible database.

No single source of truth across my functions. I oversee multiple teams, multiple direct reports, clients, and OKRs. Tasks lived in my head; action items were scattered across Google Docs, WhatsApp messages, Apple Notes, and verbal commitments. Nothing was linked to OKRs. Nothing was searchable.

I was a 30-person company's operating memory. That memory was unreliable.

Context transfer was manual and expensive. Every time I opened a new ChatGPT or Claude conversation, I re-pasted the same context: workspace IDs, team roster, templates, OKR references. The models were getting smarter every quarter, but the context that made them useful for my specific job still had to be copy-pasted, every single time.

Claude's memory system helped with surface-level facts such as my name and my role. It did not help with the structured, evolving knowledge that actually drives decisions.

Decisions had no persistent home. They existed in chat transcripts, Google Docs, and project knowledge files. None of it was searchable. None of it was linked to tasks. When someone asked about quarterly progress, I had to reconstruct it from memory and scattered documents rather than pull from a living record.

What I built

I want to be specific about what I built to fix this, because the distinction matters.

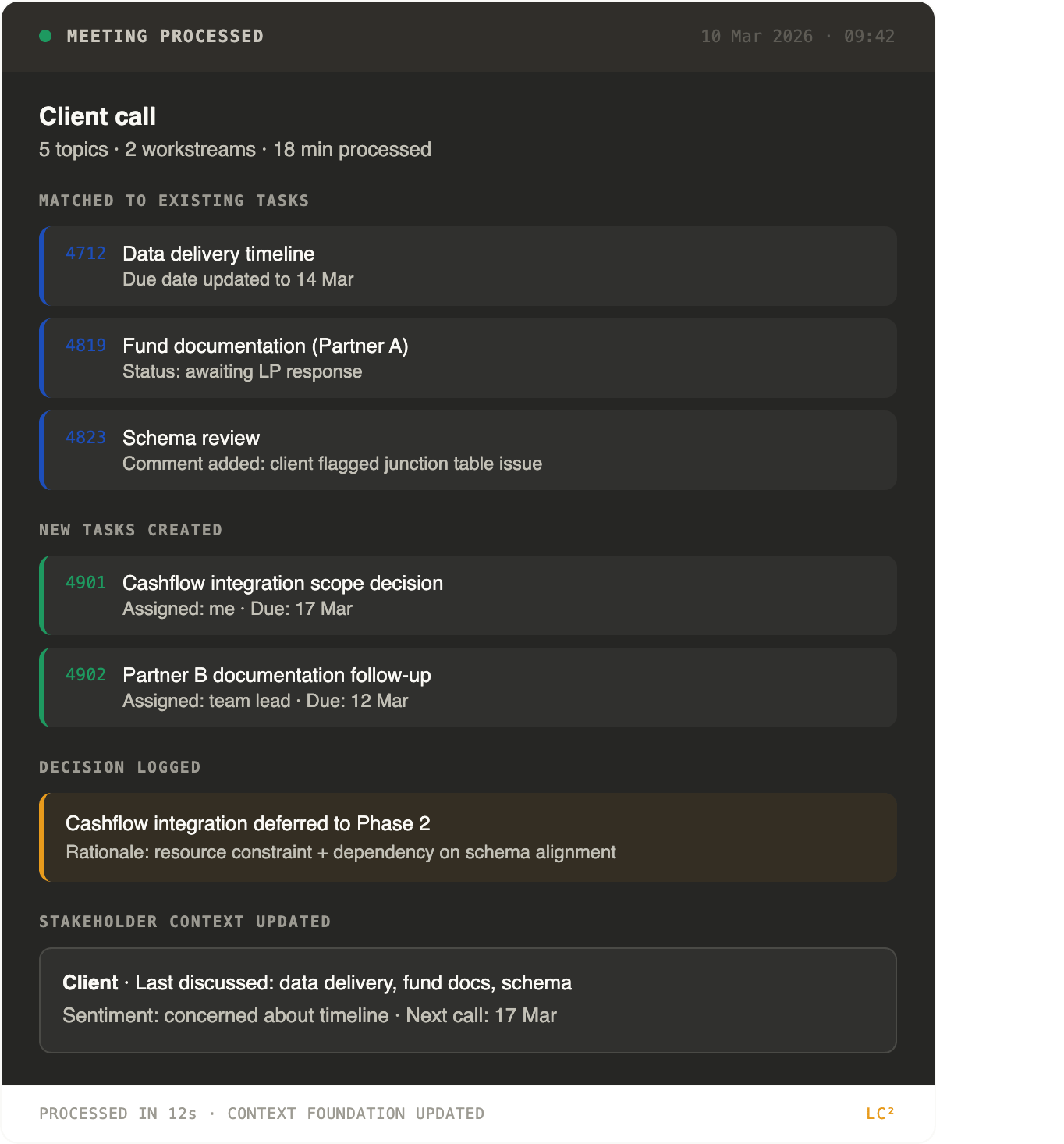

After a Monday morning call that covered five topics across two workstreams, I paste the raw notes into Claude. The system parses them, matches action items to existing tasks, creates new ones where needed, logs the meeting summary to a database with stakeholder tags, and returns a structured report.

Here's a sample output:

That output used to take me 40 minutes of manual triage. Now it takes about as long as pasting notes into a chat window.

The critical thing is not the time saved. It's that every meeting I process adds to a structured record (stakeholder context, decision history and task links) that makes the next interaction better.

Monday's call notes inform Wednesday's preparation. This week's decisions feed next month's review.

The context compounds.
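The processing step described in this section (parse the notes, match action items to existing tasks, create new ones, log the meeting with stakeholder tags, return a report) can be sketched in a few lines. This is a minimal illustration under stated assumptions, not the system itself: in the workflow above Claude does the parsing, whereas here a hypothetical `ACTION:` line prefix stands in for it, and fuzzy title matching with Python's standard `difflib.SequenceMatcher` stands in for task matching. `Task`, `MeetingLog`, `triage`, and the 0.6 similarity threshold are illustrative names and values.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date
from difflib import SequenceMatcher
from typing import Optional


@dataclass
class Task:
    # Hypothetical task record; a real board would also carry owners, due dates, OKR links.
    title: str
    status: str = "open"
    notes: list[str] = field(default_factory=list)


@dataclass
class MeetingLog:
    # Hypothetical meeting record, tagged with stakeholders so it can be searched later.
    day: date
    stakeholders: list[str]
    summary: str


def extract_action_items(raw_notes: str) -> list[str]:
    """Assumed convention: action items are lines starting with 'ACTION:'.
    This simple rule stands in for the LLM parsing described in the article."""
    items = []
    for line in raw_notes.splitlines():
        line = line.strip()
        if line.upper().startswith("ACTION:"):
            items.append(line[len("ACTION:"):].strip())
    return items


def best_match(item: str, tasks: list[Task], threshold: float = 0.6) -> Optional[Task]:
    """Return the existing task whose title is most similar to `item`,
    or None if no title clears the (arbitrary) similarity threshold."""
    best, best_score = None, 0.0
    for task in tasks:
        score = SequenceMatcher(None, item.lower(), task.title.lower()).ratio()
        if score > best_score:
            best, best_score = task, score
    return best if best_score >= threshold else None


def triage(raw_notes, stakeholders, summary, tasks, log, day=None):
    """Match action items to existing tasks, create new ones, log the meeting,
    and return a small structured report."""
    report = {"matched": [], "created": []}
    for item in extract_action_items(raw_notes):
        existing = best_match(item, tasks)
        if existing is not None:
            existing.notes.append(item)  # attach the new mention to the existing task
            report["matched"].append(existing.title)
        else:
            tasks.append(Task(title=item))
            report["created"].append(item)
    log.append(MeetingLog(day or date.today(), stakeholders, summary))
    return report


# Usage with made-up notes
tasks = [
    Task("Send Q3 data delivery timeline to client"),
    Task("Collect fund documents for portfolio companies"),
]
log = []
notes = """Discussed data delivery and documentation.
ACTION: Send Q3 data delivery timeline to the client
ACTION: Defer cashflow integration decision to next quarter
"""
report = triage(notes, ["Fund client"], "Data delivery and docs", tasks, log)
print(report)
```

The design point is the last step: every run mutates `tasks` and appends to `log`, so the next call starts from a richer record rather than a blank room.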

Who this is for

This article is for a specific person.

You're a senior professional: an ops lead, a consultant, or someone in finance or law who produces a lot of documents. You manage across functions. You use AI tools. You've tried Custom GPTs, Claude Projects, maybe even built a prompt library.

Each one helped a bit. None of them stuck.

You have the same feeling I had: the technology is clearly capable of more, but you can't make it do the thing you need because it doesn't know enough about your specific work.

You are not wrong. And it's not your fault.

The real problem is that AI tools are designed to be generally intelligent for everyone, which means they are specifically intelligent for no one.

ChatGPT's memory caps at roughly 1,200 words of explicit facts; it fills up quickly and breaks when full. Claude Projects are isolated from each other: a Work project cannot access a Personal project. Custom GPTs have retrieval that's superficially compelling but substantively unreliable.

These are features. What you need is a system.

A system that stores your professional context (your role, your stakeholders, your decision patterns, your domain knowledge) in a structured format that you own. A system that sits between you and your AI tools, so the context is portable: it works in Claude today, ChatGPT tomorrow, and whatever comes next.

A system where the byproduct of your daily work (processing a call, triaging your morning, reviewing your week) is the input that makes the system smarter.
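The portability argument can be made concrete with a minimal sketch, assuming the context lives in a plain JSON file you control and is rendered into a plain-text preamble you could paste into any assistant's instructions. This is not the Learned Context implementation; `ProfessionalContext`, its fields, the file name, and the preamble format are all hypothetical.

```python
from __future__ import annotations

import json
import tempfile
from dataclasses import asdict, dataclass, field
from pathlib import Path


@dataclass
class ProfessionalContext:
    # Hypothetical schema: the fields mirror the kinds of context named in the text.
    role: str
    stakeholders: list[str] = field(default_factory=list)
    decisions: list[str] = field(default_factory=list)
    domain_notes: list[str] = field(default_factory=list)

    def save(self, path: Path) -> None:
        # Plain JSON on disk: the user owns the file, not any one AI vendor.
        path.write_text(json.dumps(asdict(self), indent=2), encoding="utf-8")

    @classmethod
    def load(cls, path: Path) -> "ProfessionalContext":
        return cls(**json.loads(path.read_text(encoding="utf-8")))

    def record_decision(self, decision: str) -> None:
        # Daily work (e.g. processing a call) feeds new context back in.
        self.decisions.append(decision)

    def to_preamble(self, max_decisions: int = 5) -> str:
        """Render a tool-agnostic plain-text block to prepend to any model's prompt."""
        lines = [f"Role: {self.role}"]
        if self.stakeholders:
            lines.append("Stakeholders: " + ", ".join(self.stakeholders))
        recent = self.decisions[-max_decisions:]  # keep only the most recent decisions
        if recent:
            lines.append("Recent decisions:")
            lines.extend(f"- {d}" for d in recent)
        if self.domain_notes:
            lines.append("Domain notes:")
            lines.extend(f"- {n}" for n in self.domain_notes)
        return "\n".join(lines)


# Usage with illustrative values
with tempfile.TemporaryDirectory() as tmp:
    path = Path(tmp) / "context.json"
    ctx = ProfessionalContext(
        role="COO overseeing data, research and operations",
        stakeholders=["Fund client"],
        domain_notes=["African private capital markets"],
    )
    ctx.record_decision("Defer cashflow integration; revisit next quarter")
    ctx.save(path)
    reloaded = ProfessionalContext.load(path)
    preamble = reloaded.to_preamble()

print(preamble)
```

Because the stored file is plain JSON and the output is plain text, nothing here is tied to one vendor; `record_decision` is the hook where daily work feeds back into the context.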

The thesis

That's what Learned Context is. Not another productivity tool. Not a prompt library. Not a course on how to talk to chatbots.

A living context layer that compounds.

I don't know yet if this becomes a company or a small, focused membership that serves a few hundred professionals exceptionally well. What I know is that the problem is real, because I lived it. What I know is that the architecture works, because I built it for myself first.

And what I know is that nobody is building this for non-technical operators, the people who actually need it most.

The tools are powerful. The context is missing.

That's the gap.